Iteration 4 · GenAI Works Cohort · September 2025

C.5.1 Institutional context

Iteration 4 was the second compressed cohort of my course, delivered September 8 through September 12, 2025, through a partnership with GenAI Works. The workshop was streamed on the GenAI Works YouTube channel rather than held in a closed institutional Zoom session, which opened the live broadcast to a global online audience. The structure preserved the Monday-through-Friday day-by-day format that Iteration 3 had established.

This iteration extended the distillation phase of my pioneer practice and is the iteration with the most extensive recorded teaching delivery in the corpus. The five day-by-day transcripts (TR-4.D1 through TR-4.D5) together total approximately fifty-five thousand words of verbatim teaching delivery.

C.5.2 The same template as Iteration 3 with date headers changed

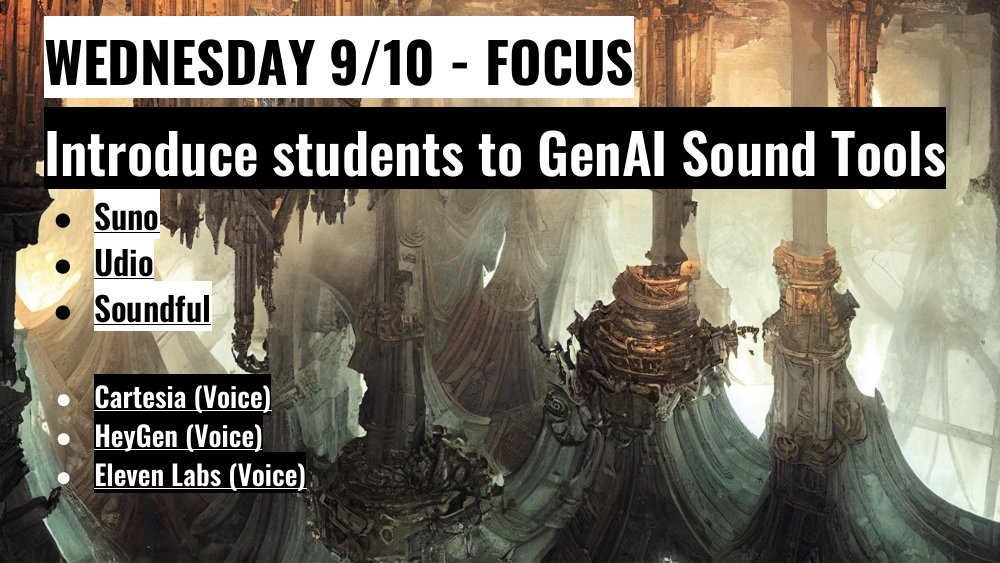

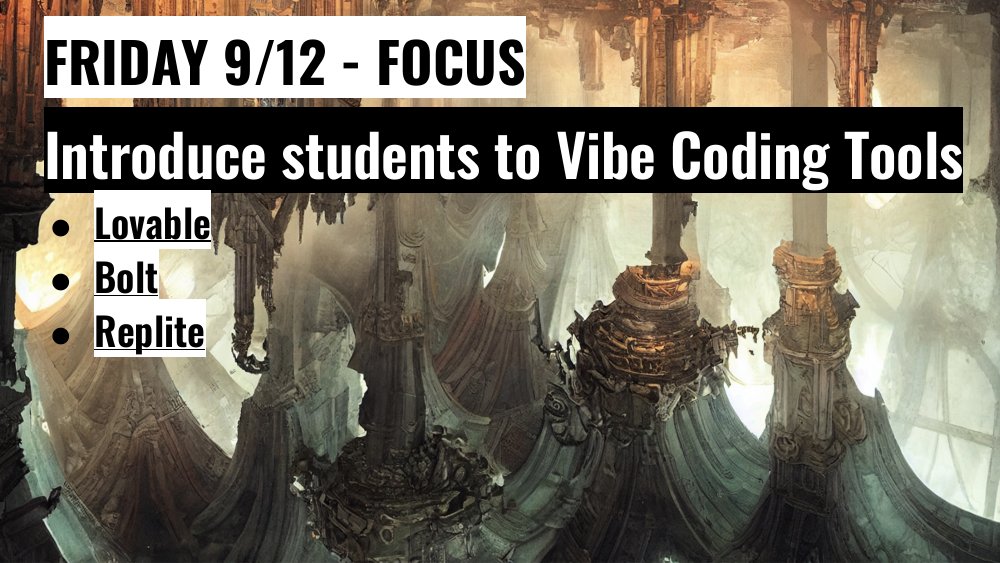

The Iteration 4 workshop deck (DK-4) preserves the structure of the Iteration 3 deck (DK-3). The deck carries twenty-two slides with the same day-by-day organization (Monday image, Tuesday video, Wednesday audio, Thursday research, Friday human-centered AI), the same topical structure within each day, and only the date headers updated to reflect the September 8 through September 12 schedule.

The template stability between cohorts is the most concrete evidence of the compression-as-curriculum-maturation pattern (§D.2.3). The same workshop runs again, with the same architecture and the same daily topics, for a new audience three weeks after Cohort 1 concluded. The pedagogical structure had stabilized through Iteration 3's first delivery and operated as a working artifact in Iteration 4. \autoref{fig:dk4-workshop-gallery} samples DK-4 across the same five days as the DK-3 gallery in §C.4.3; the two galleries should be read side by side to see how the template held its shape between cohorts.

C.5.3 YouTube delivery and global audience visibility

The GenAI Works YouTube channel made the workshop visible to a globally distributed audience during the live broadcast. We originally configured the workshop on StreamYard, but the platform could not accommodate the number of registered attendees, so we switched to YouTube Live to reach the live audience at scale. The audience figures substantially exceed those of Cohort 1: the Iteration 4 Luma event page accumulated 4,731 registered guests (LR-4) across the cohort's promotion window, and the Day 1 YouTube broadcast on September 8, 2025 drew 2,654 live online participants (LR-4.D1). Audience feedback collected through the Luma platform produced 256 ratings with an average of 4.2 of 5 (LF-4), a far larger volume than Cohort 1's 29-response Luma feedback corpus (LF-3), reflecting the substantially larger registered base.

The Day 1 transcript (TR-4.D1, 10,962 words) opens with the host Samuel Cummings welcoming participants and naming the geographies in the chat (TR-4.D1-Q1, verbatim):

"Amazing. Welcome everybody. I mean, I see so much excitement in the chat. How's everybody doing out there? ... There we go. We got um people from all over the world. I see Nigeria, Denver, UK, Costa Rica. I mean, what a time to be alive, to be here virtually."

The opening also introduces me by name and presents my dissertation chair, Tom Yeh, as a co-host from CU Boulder (TR-4.D1-Q2, verbatim):

"So, uh we have Tom Yei. welcome from uh you know University of Colorado uh Boulder. We have Lissa Schwarz as well is going to be our main host today."

(Speech-recognition errors in the transcript include "Tom Yei" for Tom Yeh and "Lissa Schwarz" for Larissa Schwartz; the names are correctly identifiable in context.)

The audience identification happens live in the workshop format because GenAI Works prompts each attendee to identify themselves in the chat. This is evidence for the multi-channel teaching practice finding (§D.3.3): the same four-theme curriculum that had reached approximately twenty-five Boulder CTD undergraduates in Iteration 1 now reached a globally distributed online audience in Iteration 4, with the architecture preserved.

C.5.4 Day-by-day transcript analysis

The five day-by-day transcripts are the principal artifact of Iteration 4. Their volumes are:

| ID | Day | Topic | Word count |
|---|---|---|---|
| TR-4.D1 | Monday | Image generation | 10,962 |
| TR-4.D2 | Tuesday | Video generation | 11,395 |
| TR-4.D3 | Wednesday | Audio generation | 11,599 |
| TR-4.D4 | Thursday | Research tools | 9,921 |
| TR-4.D5 | Friday | Human-centered AI and Vibe Coding | 11,276 |

Total: 55,153 words, or approximately 55,000 words of verbatim teaching delivery across the five days.
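The approximate total can be checked against the per-day counts in the table above. The following is a minimal Python sketch of that check; the names `word_counts`, `total_words`, and `round_to_nearest` are illustrative helpers introduced here and are not part of the dissertation's evidence tooling.

```python
# Word counts copied from the transcript table (TR-4.D1 through TR-4.D5).
word_counts = {
    "TR-4.D1": 10_962,  # Monday, image generation
    "TR-4.D2": 11_395,  # Tuesday, video generation
    "TR-4.D3": 11_599,  # Wednesday, audio generation
    "TR-4.D4": 9_921,   # Thursday, research tools
    "TR-4.D5": 11_276,  # Friday, human-centered AI and Vibe Coding
}


def total_words(counts: dict[str, int]) -> int:
    """Sum the per-transcript word counts."""
    return sum(counts.values())


def round_to_nearest(value: int, step: int = 1_000) -> int:
    """Round a count to the nearest multiple of `step` (illustrative helper)."""
    return int(round(value / step)) * step


total = total_words(word_counts)
print(total)                    # 55153
print(round_to_nearest(total))  # 55000
```

The exact sum rounds to the fifty-five thousand words cited throughout this section.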

The transcripts capture my live instructional voice in ways that the slide decks and the Luma feedback survey for Iteration 3 do not. They are the principal evidence base for the narrative-visibility criterion (§B.3.3) within Iteration 4 and the contemporaneous record of how the compressed workshop format actually unfolded in delivery. The transcripts carry evidence for each of the three curriculum-design principles that Chapter D elaborates.

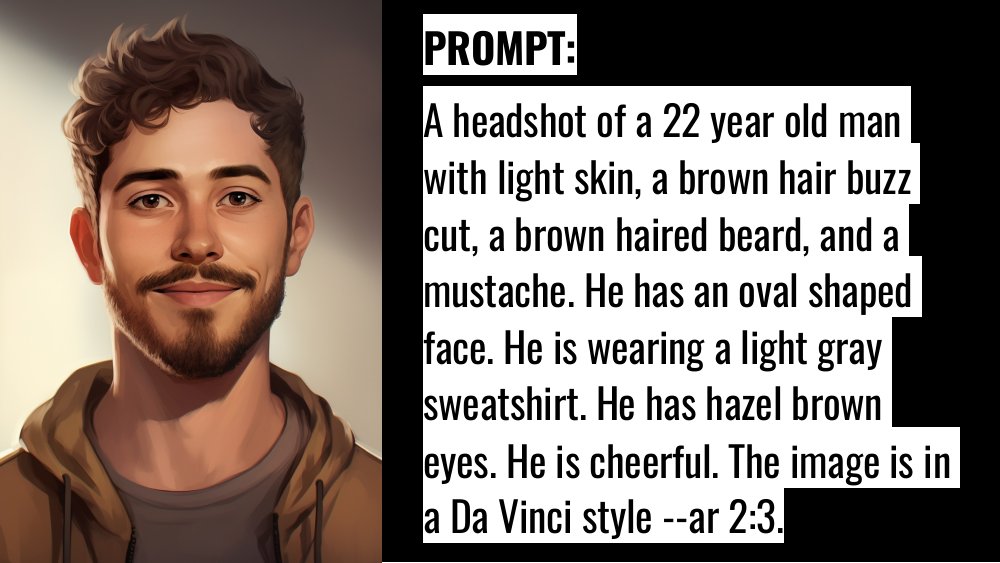

Modularity in the day-level structure (previews §D.2). Each day of Iteration 4 corresponds to a distinct curriculum module: image generation (D1), video generation (D2), audio generation (D3), research tools (D4), and human-centered AI with Vibe Coding (D5). The day-level packaging is the same modular architecture I had run across the four-theme weekly arc in Iterations 1 and 2, compressed into a five-day delivery. The transcripts let the modular structure be read as instructor-voice delivery rather than as static slide framing. Chapter D §D.2 elaborates the architectural-stability reading of this evidence; §D.2.3 elaborates the compression-as-curriculum-maturation sub-claim that the five-day cadence supports.

Learner choice instantiated tool-by-tool (previews §D.3). Across the 55,000 words I walk learners through multiple tools per topic, invite the audience to choose among them, and treat the choice itself as a learning move. The Microsoft Designer walkthrough on Day 1 (TR-4.D1-Q3) closes on the choice frame: "if you're still not getting a result that you like, then try a different tool." Chapter D §D.3 elaborates the dialogue-with-informants reading of this evidence, and §D.3.3 elaborates the multi-channel teaching practice sub-claim that the global-audience delivery surface supports.

Continuous feedback as real-time response to encounters (previews §D.4). The transcripts document the reflexive loop in action: I observe what a tool produces, name what I see, and adjust the pedagogy in the next sentence rather than in the next iteration. The TR-4.D1-Q3 passage is one explicit instance (verbatim):

"And you can also see these different hallucinations that are going on. So there aren't even bodies in these shoes. And so it's really interesting how these different tools are used. Sometimes I get some really really great image generation and it just depends on what you want to use it for, but other times there are a lot of hallucinations that you can get within these specific tools. And so once again, you just have to make sure and take a look at everything. And even if you do get a hallucination on the first time, it doesn't mean give up. You can keep inputting and inputting and inputting the prompts into into the generator and see if it changes. And so I would I would continue to keep working with the specific tool and if you're still not getting a result that you like, then try a different tool."

I name the phenomenon by its technical term (hallucination), point to a concrete example (the shoes without bodies), normalize the occurrence, and convert the encounter into an iteration practice in real time. Chapter D §D.4 elaborates this passage as evidence for the continuous-feedback principle, with hallucination-as-pedagogy treated as a side sub-claim that surfaces inside the principle rather than as the principle-level finding. The principle the transcript supports is continuous feedback; hallucination-as-pedagogy is one place where that principle becomes visible in instructor practice.

The transcripts are cited at the source level (TR-4.D1 through TR-4.D5) throughout this dissertation, with Q-IDs assigned to specific instructor-voice passages. The master evidence table records the full set of Q-IDs in use.

C.5.5 Tom Yeh as guest from CU Boulder on Day 1

Day 1 of Iteration 4 featured my dissertation chair Tom Yeh as a guest speaker connecting from CU Boulder (visible in TR-4.D1). Yeh's appearance is one of the cross-iteration member-checking moments noted in §B.5.4: he is one of the two PIs on the AI-IRT Seed Grant (AI-PROPOSAL) that funded the broader research arc, and his presence as a guest in Iteration 2 (DeepSeek lecture, January 28, 2025) and now Iteration 4 (Day 1 guest) is partially documented in the corpus itself.

The CU-Boulder-to-global-audience pattern that Yeh's guest appearance instantiates is also a piece of evidence for the multi-channel teaching practice finding. The local institution (CU Boulder) and the global delivery channel (GenAI Works on YouTube) were brought into the same live session through the guest configuration.

C.5.6 Comparison to Cohort 1 · same structure, different delivery surface

Iteration 4 and Iteration 3 are structurally identical workshops delivered to two different audiences through two different platforms. The differences worth marking are:

| Dimension | Iteration 3 | Iteration 4 |
|---|---|---|
| Sponsoring institution | CEAS (CU Boulder College of Engineering and Applied Science) | GenAI Works (external partnership) |
| Delivery platform | Luma plus Zoom | GenAI Works YouTube Live (StreamYard was tried first but could not accommodate the registered audience) |
| Audience composition | 65% students, primarily Boulder and US | Global, with Nigeria, UK, Costa Rica, and US attendees named in the Day 1 transcript opening |
| Live audience size | 129 attended (LR-3) | 2,654 live Day 1 participants on YouTube Live (LR-4.D1), against 4,731 Luma registrations (LR-4) |
| Audience evaluation | Luma feedback survey, 29 responses, 4.69 of 5 average (LF-3) | Luma feedback survey, 256 ratings, 4.2 of 5 average (LF-4) |
| Recorded delivery available? | Limited | Comprehensive (five day-by-day transcripts, 55,000 words) |
| Guest speakers | Curriculum-internal only | Tom Yeh from CU Boulder on Day 1 |

The differences are substantial in audience and platform; the curricular structure is the same. The same workshop runs against two different audience-and-platform configurations.
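The scale of the audience difference can be made concrete with simple ratios over the figures in the comparison table. The sketch below is illustrative only: the variable names are introduced here, and the ratios carry an assumption worth stating, namely that the Iteration 3 attendance figure (LR-3) and the Iteration 4 Day 1 live figure (LR-4.D1) are treated as comparable even though they may not measure the same thing.

```python
# Figures copied from the comparison table; source IDs noted per line.
iteration_3 = {
    "live_audience": 129,        # LR-3
    "feedback_responses": 29,    # LF-3
}
iteration_4 = {
    "live_audience": 2_654,      # LR-4.D1 (Day 1 only)
    "feedback_responses": 256,   # LF-4
    "registrations": 4_731,      # LR-4
}

# How many times larger Iteration 4 was on each measure.
live_scale = iteration_4["live_audience"] / iteration_3["live_audience"]
feedback_scale = (
    iteration_4["feedback_responses"] / iteration_3["feedback_responses"]
)

# Share of Iteration 4 registrants who joined the Day 1 live broadcast.
day1_live_share = iteration_4["live_audience"] / iteration_4["registrations"]

print(f"Live audience: {live_scale:.1f}x")           # Live audience: 20.6x
print(f"Feedback volume: {feedback_scale:.1f}x")     # Feedback volume: 8.8x
print(f"Day 1 live share: {day1_live_share:.1%}")    # Day 1 live share: 56.1%
```

Read under that assumption, the live audience grew roughly twentyfold between cohorts while the feedback volume grew roughly ninefold.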

C.5.7 What this iteration accomplished

Iteration 4 accomplished four things that complete the dissertation's evidence base.

First, it extended the distillation phase to a global audience. The compression-as-curriculum-maturation pattern (§D.2.3) is documented across both Iteration 3 (CEAS-sponsored, predominantly student audience) and Iteration 4 (GenAI Works partnership, global audience). The architecture survives both deliveries.

Second, it produced the corpus's most extensive recorded teaching delivery. The five day-by-day transcripts (TR-4.D1 through TR-4.D5) total approximately fifty-five thousand words, providing the analytic-reflexivity criterion (§B.3.2) with a level of contemporaneous delivery evidence that Iterations 1, 2, and 3 do not match individually.

Third, it provided the most recent anchor source for the hallucination-as-pedagogy finding (§D.4). The Day 1 transcript (TR-4.D1) carries hallucination as a teaching topic in a stabilized form, completing the finding's twenty-month evidence base across four independent sources.

Fourth, it instantiated the multi-channel teaching practice finding (§D.3.3) at scale. The pioneer's four-theme curriculum reached a global online audience through a non-CU delivery platform, with the dissertation chair appearing as a guest from the home institution. The multi-channel character of the pioneer practice is most fully visible in this iteration.

Section C.6 next presents the cross-iteration comparative analysis that synthesizes the four iteration narratives.